Riiiight so both Corbyn and Angela Eagle can run for Labour Leader, so the election’s on. Labour’s turn to agonise about runners, riders, the gap between party members, the MPs who they volunteer for, and the public who might (or might not) reward them with power. This time round the stakes couldn’t be higher, with a Brexit to influence, likely Scottish independence, and possible general election. Labour’s problems will be acute and more so than the Tories (their spin machine has somehow turned their own fortnight of indecision and ineptitude into an object lesson in ruthlessness… amazing.) This is because Labour’s problems – shifting support bases, policy fracture points, and the large and apparently increasing disconnects between voters, MPs, and a large membership* – are deeper, older, and until recently less-discussed than the Tories’ main weak points on Europe and social liberalism. Here’s my tuppence on this, all collected in one place, in no real order of logic/emphasis:

1) This isn’t the 80s. It isn’t a re-run of Militant, a long-term plan of an external party; Corbyn (at the last election) got a massive majority of Labour Members and Labour Registered Supporters.

2) This isn’t the 90s, or even the Noughties, either. Two-party politics in the UK is dead, dead, dead. Not least because the UK itself as a political entity is heading for the dustbin faster than Cameron’s ‘DC – UK PM’ business cards.

3) It isn’t a ‘Corbyn cult’. Lots of people, me included switched onto Corbyn because of his policies, not the other way round.

4) That ‘7, 7-and-a-half’ performance. Watch the full interview: Did Corbyn bang the EU drum as loudly and clearly as Cameron, Brown, Blair and Major? No. He articulated a nuanced, balanced, fact-based opinion (essentially “I’m broadly in favour of the EU and many of the benefits, but we shouldn’t ignore the problems”) in the way that we kept being told other campaigners weren’t doing – and got castigated for it. Naïve? He’s been into politics for 40-odd years. I think he was just doing what he was elected for, saying what he believes the truth to be, simply.

5) Will Corbyn ever be PM? I personally don’t think so. But the future of UK politics is probably coalitions – see (2) above – so the views he’s highlighted and continues to champion will be (I believe) represented by a coalition or leftist grouping in power.

6) If the problem is style, Angela Eagle isn’t the answer. A bit more centrist… a bit more electable… a bit less weird… a bit less Marxist… sure. But if the problem is that Corbyn isn’t enough like Blair, Eagle isn’t the answer. PM May will utterly decimate her, in seconds.

7) The policies are belters: Renationalising railways; reversing NHS privatisation; A Brexit that prioritises EEA access and freedom of movement over border control; a genuine national living wage, properly enforced; scrapping tuition fees and expanding apprenticeships; environmental protection; socially liberal. Fair personal, corporate, financial and ecological taxation to pay for it. These are all massive and proven winners amongst (variously) from 50+ to 85% of the electorate.

8) Trident is a red herring. Forget it. I’m not sure how I feel about unilateral disarmament personally. But given he’s unlikely to be a majority PM (see above) Corbyn’s stance on nuclear weapons doesn’t really rate in importance for me compared to Brexit, the NHS and taxation. In any case the lifespan of the current system can be extended to postpone that discussion past 2020 – the MoD are actually quite good at kicking stuff like this into the long grass for a bit. By then the left (hard- and centre-) can get themselves into shape for that debate. Dividing ourselves now over something the right are utterly united on, and a clear majority of the public support, is madness (Corbyn even acknowledged so with his defence review). There’s much better reasons to argue…

9) Honest votes are more powerful. By that I mean both that the recent UK referendums showed – to every single person in the country better than a lecture ever could – that first-past-the-post is rotten**, that tactical voting or second-guessing your fellow electors is stupid, dangerous and counterproductive, and that (shock, horror) voting for something you believe in is an energising and rewarding experience in its own right. This is also true of leadership elections, don’t forget; how many Tories egging on Boris now wished they hadn’t? Or backed Gove instead of Leadsom? And lastly…

10) The ‘split risk’…

Tensions in what used to be a millions-strong Labour movement between left-behind poor and optimistic urbanites have become unendurable. They might not lead to a party split (although the press have started to publicly contemplate what lots of us have been saying for a year, or more) but equally might. Should you vote for Corbyn if you want a split, or if you want unity? It’s impossible to know, so see (9) above and vote for the person/policies you prefer.

As to the desirability of a split, well, tensions are often resolved by fractures. There need not be a SDP-type irrelevance created – the political landscape is completely different now, with smaller parties proven and established, and many more proportional elections apart from Westminster in play. More importantly, figures in the Greens, Lib Dems and Labour have already spoken overtly in the press about the need for a new, broad centre-left coalition, which both Labour descendent parties could contribute to without antagonising each other’s supporters. Probably more happily and successfully!

It’s also important to remember that the ‘unite and fight’ ethos that animates the Labour Party – which (especially) Blairite PLP are mobilising to justify opposition to Corbyn, disingenuously I feel – predates the Labour Party by a century or more. Recall the Diggers, Levellers, Abolitionists, various religious groups, Socialists, Trade Unionists… lefty-ness has always been necessarily a big tent, but which poles are placed firmly in the earth and the strength of the storm define how big the canvas is. Progressive movements which want to redistribute power and wealth from the self-protecting, actual, ever-present, and very real, ruling class can’t always be populist, and span acres of political ground, and find expression in a single monolithic electoral party. Sometimes two, but rarely three of those. If it’s time to move the poles around to firmer ground, we should.

So that’s my take. If you can vote, I hope you do…

*However much the PLP might want to, they can’t ignore the fact that a progressive party needs vast numbers of volunteers in their millions, far more than a right-wing party which can afford paid helpers. Substituting volunteers for better fundraising adverts and more millionaire backers is a symptom of the root cause, not a solution.

**Imagine how different our democracy would look if we’d had compulsory voting for the last 20 years, with county-wide party votes used to fill a proportionally-elected House of Lords. How much healthier would we be, then?

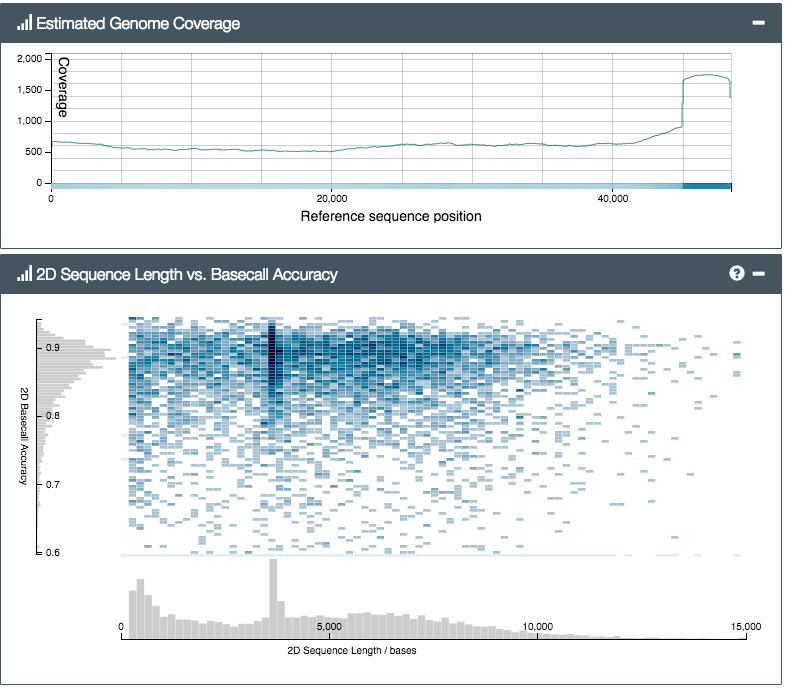

First up, the size. The pictures don’t really do it justice… compared with existing machines (fridge-sized, often) the MinION isn’t small, it’s tiny. The thing itself, plus box and cable, would easily fit into a side pocket on any laptop bag. So the ‘portable’ bit is certainly true, as far as the platform itself is concerned. But there’s a catch – to prepare a biological sample for sequencing on the MinION, you first have to go through a fairly complicated set of lab steps. Known collectively as ‘library preparation’ (a library here meaning a test tube containing a set of DNA molecules that have been specially treated to prepare them to be sequenced), the existing lab protocol took us several hours the first time. Partly that was due to the sheer number of curious onlookers, but partly because some of the requisite steps (bead cleanups etc) just need time and concentration to get right. None of the steps is particularly complicated (I’m crap at labwork and just about followed along) but there’s quite a few, and you have to follow the steps in the protocol carefully.

First up, the size. The pictures don’t really do it justice… compared with existing machines (fridge-sized, often) the MinION isn’t small, it’s tiny. The thing itself, plus box and cable, would easily fit into a side pocket on any laptop bag. So the ‘portable’ bit is certainly true, as far as the platform itself is concerned. But there’s a catch – to prepare a biological sample for sequencing on the MinION, you first have to go through a fairly complicated set of lab steps. Known collectively as ‘library preparation’ (a library here meaning a test tube containing a set of DNA molecules that have been specially treated to prepare them to be sequenced), the existing lab protocol took us several hours the first time. Partly that was due to the sheer number of curious onlookers, but partly because some of the requisite steps (bead cleanups etc) just need time and concentration to get right. None of the steps is particularly complicated (I’m crap at labwork and just about followed along) but there’s quite a few, and you have to follow the steps in the protocol carefully.